- An obvious extension of the secant method is to use three points at a time instead of two. Suppose we begin with two approximations, $x_0$ and $x_1$, to a root of $f(x) = 0$, and that the secant method is used to compute a third approximation $x_2$. Instead of discarding $x_0$ or $x_1$, we may construct the unique quadratic interpolating polynomial $p_2$ for $f$ at all three points.

- The secant method has an order of convergence between 1 and 2. To discover it, we need to modify the code so that it remembers all the approximations. The following code is Newton's method, but it remembers all the iterations in the list x. We use x(1) for $x_1$ and similarly x(n) for $x_n$.
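A minimal Python sketch of Newton's method that keeps every approximation might look as follows. The function name `newton_iterates`, the test problem $f(x) = x^2 - 2$ and the starting value are assumptions made for illustration. Python lists are 0-indexed, so here `x[0]` holds $x_1$ and `x[n - 1]` holds $x_n$.

```python
def newton_iterates(f, df, x1, steps):
    """Run Newton's method and return every approximation in a list.

    The list is 0-indexed: x[0] holds x1, x[1] holds x2, and so on.
    """
    x = [x1]
    for _ in range(steps):
        # Newton update: subtract f(x) / f'(x) from the latest iterate.
        x.append(x[-1] - f(x[-1]) / df(x[-1]))
    return x


# Hypothetical test problem: f(x) = x^2 - 2, whose positive root is sqrt(2).
x = newton_iterates(lambda t: t * t - 2.0, lambda t: 2.0 * t, 1.0, 5)
for n, xn in enumerate(x, start=1):
    print(n, xn)
```

Keeping the whole list, rather than only the latest value, is what makes it possible to compare successive errors afterwards.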

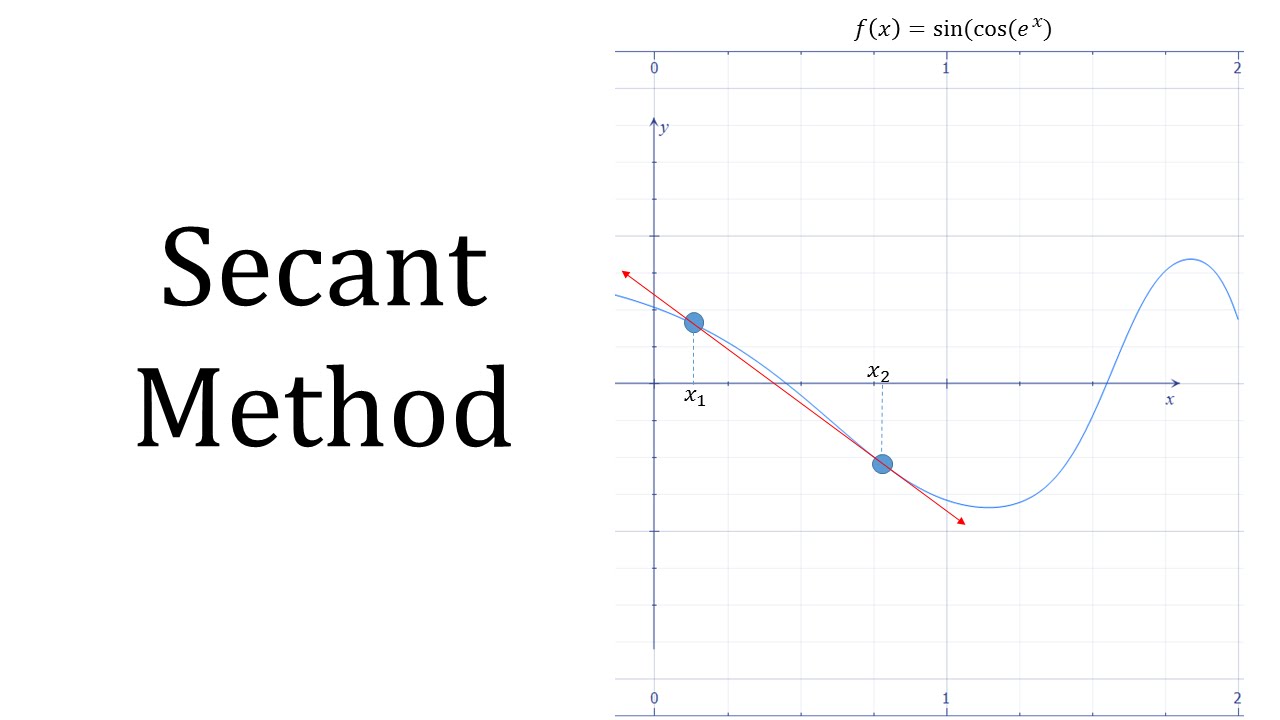

In numerical analysis, the secant method is a root-finding algorithm that uses a succession of roots of secant lines to better approximate a root of a function $f$. The secant method can be thought of as a finite-difference approximation of Newton's method. However, the method was developed independently of Newton's method and predates it by over 3000 years.[1]

The method

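The secant method is defined by the recurrence relation

$$x_n = x_{n-1} - f(x_{n-1}) \frac{x_{n-1} - x_{n-2}}{f(x_{n-1}) - f(x_{n-2})} = \frac{x_{n-2} f(x_{n-1}) - x_{n-1} f(x_{n-2})}{f(x_{n-1}) - f(x_{n-2})}.$$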

As can be seen from the recurrence relation, the secant method requires two initial values, $x_0$ and $x_1$, which should ideally be chosen to lie close to the root.

Derivation of the method

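Starting with initial values $x_0$ and $x_1$, we construct a line through the points $(x_0, f(x_0))$ and $(x_1, f(x_1))$, as shown in the picture above. In slope–intercept form, the equation of this line is

$$y = \frac{f(x_1) - f(x_0)}{x_1 - x_0}(x - x_1) + f(x_1).$$

The root of this linear function, that is, the value of $x$ such that $y = 0$, is

$$x = x_1 - f(x_1) \frac{x_1 - x_0}{f(x_1) - f(x_0)}.$$

We then use this new value of $x$ as $x_2$ and repeat the process, using $x_1$ and $x_2$ instead of $x_0$ and $x_1$. We continue this process, solving for $x_3$, $x_4$, etc., until we reach a sufficiently high level of precision (a sufficiently small difference between $x_n$ and $x_{n-1}$):

$$\begin{aligned}
x_2 &= x_1 - f(x_1) \frac{x_1 - x_0}{f(x_1) - f(x_0)}, \\
x_3 &= x_2 - f(x_2) \frac{x_2 - x_1}{f(x_2) - f(x_1)}, \\
&\;\;\vdots \\
x_n &= x_{n-1} - f(x_{n-1}) \frac{x_{n-1} - x_{n-2}}{f(x_{n-1}) - f(x_{n-2})}.
\end{aligned}$$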
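The iteration with this stopping rule can be sketched in Python as follows. The function name `secant`, the tolerance, the iteration cap and the test function $f(x) = x^3 - x - 2$ are assumptions chosen for illustration, not part of the method's definition.

```python
def secant(f, x0, x1, tol=1e-12, max_iter=50):
    """Approximate a root of f, stopping when |x_n - x_{n-1}| <= tol."""
    f0, f1 = f(x0), f(x1)
    for _ in range(max_iter):
        if f1 == f0:
            # The secant line is horizontal and has no x-intercept.
            raise ZeroDivisionError("f(x_n) equals f(x_{n-1})")
        x2 = x1 - f1 * (x1 - x0) / (f1 - f0)
        if abs(x2 - x1) <= tol:
            return x2
        # Shift the pair of points; only one new evaluation of f per step.
        x0, f0 = x1, f1
        x1, f1 = x2, f(x2)
    raise RuntimeError("no convergence within max_iter iterations")


# Hypothetical example: f(x) = x^3 - x - 2 has a root between 1 and 2.
root = secant(lambda x: x**3 - x - 2.0, 1.0, 2.0)
print(root)
```

Caching `f0` and `f1` means each step costs a single new function evaluation.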

Convergence

The iterates $x_n$ of the secant method converge to a root of $f$ if the initial values $x_0$ and $x_1$ are sufficiently close to the root. The order of convergence is φ, where

$$\varphi = \frac{1 + \sqrt{5}}{2} \approx 1.618$$

is the golden ratio. In particular, the convergence is superlinear, but not quite quadratic.

This result only holds under some technical conditions, namely that $f$ be twice continuously differentiable and the root in question be simple (i.e., with multiplicity 1).
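The order can be estimated numerically from successive errors $e_n = |x_n - r|$ using the ratio $\log(e_{n+1}/e_n) / \log(e_n/e_{n-1})$. The sketch below assumes the test function $f(x) = x^2 - 2$ with known root $r = \sqrt{2}$ and starting values 1 and 2. All names are illustrative. Only the later estimates are meaningful, and they fluctuate around φ rather than settling on it exactly after so few steps.

```python
import math


def secant_iterates(f, x0, x1, steps):
    """Return the list [x0, x1, x2, ...] produced by the secant method."""
    x = [x0, x1]
    for _ in range(steps):
        x.append(x[-1] - f(x[-1]) * (x[-1] - x[-2]) / (f(x[-1]) - f(x[-2])))
    return x


root = math.sqrt(2.0)  # known root of the assumed test function
x = secant_iterates(lambda t: t * t - 2.0, 1.0, 2.0, 5)
errors = [abs(xn - root) for xn in x]

# Estimated order from three consecutive errors at a time.
orders = [
    math.log(errors[n + 1] / errors[n]) / math.log(errors[n] / errors[n - 1])
    for n in range(1, len(errors) - 1)
]
print(orders[-2:])
```

The number of steps is kept small on purpose: once the error reaches machine precision, the logarithms of the error ratios are dominated by rounding noise.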

If the initial values are not close enough to the root, then there is no guarantee that the secant method converges. There is no general definition of 'close enough', but the criterion has to do with how 'wiggly' the function is on the interval $[x_0, x_1]$. For example, if $f$ is differentiable on that interval and there is a point where $f' = 0$ on the interval, then the algorithm may not converge.

Comparison with other root-finding methods

The secant method does not require that the root remain bracketed, as the bisection method does, and hence it does not always converge. The false position method (or regula falsi) uses the same formula as the secant method. However, it does not apply the formula on $x_{n-1}$ and $x_{n-2}$, like the secant method, but on $x_{n-1}$ and on the last iterate $x_k$ such that $f(x_k)$ and $f(x_{n-1})$ have a different sign. This means that the false position method always converges.
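A minimal sketch of the false position method is given below for contrast. It keeps a bracket $[a, b]$ with $f(a)$ and $f(b)$ of opposite sign, which is one common way to implement the rule described above. The function name, the residual-based stopping test and the example function are assumptions for illustration.

```python
def false_position(f, a, b, tol=1e-10, max_iter=200):
    """Approximate a root of f in [a, b], keeping the root bracketed."""
    fa, fb = f(a), f(b)
    if fa * fb > 0:
        raise ValueError("f(a) and f(b) must have opposite signs")
    c = a
    for _ in range(max_iter):
        # Same formula as the secant method, applied to the bracket ends.
        c = b - fb * (b - a) / (fb - fa)
        fc = f(c)
        if abs(fc) <= tol:
            return c
        # Replace the endpoint whose function value has the same sign as f(c).
        if fa * fc < 0:
            b, fb = c, fc
        else:
            a, fa = c, fc
    return c


# Hypothetical example: f(x) = x^3 - x - 2 changes sign on [1, 2].
r = false_position(lambda x: x**3 - x - 2.0, 1.0, 2.0)
print(r)
```

Because the two retained points always straddle a sign change, the denominator `fb - fa` cannot vanish, at the price of slower (typically linear) convergence.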

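The recurrence formula of the secant method can be derived from the formula for Newton's method

$$x_n = x_{n-1} - \frac{f(x_{n-1})}{f'(x_{n-1})}$$

by using the finite-difference approximation

$$f'(x_{n-1}) \approx \frac{f(x_{n-1}) - f(x_{n-2})}{x_{n-1} - x_{n-2}}.$$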

The secant method can be interpreted as a method in which the derivative is replaced by an approximation and is thus a quasi-Newton method.
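Written out, the substitution is a single step. Replacing $f'(x_{n-1})$ in the Newton update by the difference quotient through the two most recent iterates gives

$$x_n = x_{n-1} - \frac{f(x_{n-1})}{f'(x_{n-1})} \;\approx\; x_{n-1} - f(x_{n-1}) \frac{x_{n-1} - x_{n-2}}{f(x_{n-1}) - f(x_{n-2})},$$

and the right-hand side is exactly the secant recurrence.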

If we compare Newton's method with the secant method, we see that Newton's method converges faster (order 2 against φ ≈ 1.6). However, Newton's method requires the evaluation of both $f$ and its derivative $f'$ at every step, while the secant method only requires the evaluation of $f$. Therefore, the secant method may occasionally be faster in practice. For instance, if we assume that evaluating $f$ takes as much time as evaluating its derivative and we neglect all other costs, we can do two steps of the secant method (decreasing the logarithm of the error by a factor φ² ≈ 2.6) for the same cost as one step of Newton's method (decreasing the logarithm of the error by a factor 2), so the secant method is faster. If, however, we consider parallel processing for the evaluation of the derivative, Newton's method proves its worth, being faster in time, though still spending more steps.

Generalizations

Broyden's method is a generalization of the secant method to more than one dimension.

The following graph shows the function f in red and the last secant line in bold blue. In the graph, the x intercept of the secant line seems to be a good approximation of the root of f.

Computational example

Below, the secant method is implemented in the Python programming language.

It is then applied to find a root of the function $f(x) = x^2 - 612$ with initial points $x_0$ and $x_1$.
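A minimal sketch of such an implementation follows. The initial points 10 and 30, the fixed count of five iterations, and the function names are assumptions made for illustration. The result can be checked against the exact positive root $\sqrt{612}$.

```python
def secant_method(f, x0, x1, iterations):
    """Return the approximation after a fixed number of secant steps."""
    for _ in range(iterations):
        x2 = x1 - f(x1) * (x1 - x0) / (f(x1) - f(x0))
        x0, x1 = x1, x2
    return x1


def f_example(x):
    return x**2 - 612


# Assumed starting points and iteration count.
root = secant_method(f_example, 10.0, 30.0, 5)
print(f"Root: {root}")
```

A fixed iteration count keeps the example short. It performs no check for $f(x_n) = f(x_{n-1})$, so running many more iterations than needed can end in a division by zero once the iterates stop changing.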

Notes

1. Papakonstantinou, J., The Historical Development of the Secant Method in 1-D, retrieved 2011-06-29

References

- Kaw, Autar; Kalu, Egwu (2008), Numerical Methods with Applications (1st ed.).

- Allen, Myron B.; Isaacson, Eli L. (1998). Numerical analysis for applied science. John Wiley & Sons. pp. 188–195. ISBN 978-0-471-55266-6.

External links

- Secant Method Notes, PPT, Mathcad, Maple, Mathematica, Matlab at Holistic Numerical Methods Institute

- Weisstein, Eric W. "Secant Method". MathWorld.